Worlds gets $10 million to help enterprises observe their physical environments with AI

Worlds, whose AI helps large organizations observe their physical spaces to ensure security, safety, and productivity, has raised $10 million in a first round of funding.

The Dallas-based startup uses multiple cameras to track spaces in 3D over time, providing a more reliable view of what’s happening than typical 2D security video would. Worlds then trains its AI models to find unusual patterns within and across locations — and if a pattern is unusual enough, it alerts the company.

Most surveillance providers rely on rules-based systems, in which humans write the rules for what is considered unusual. But Worlds asserts that this doesn’t go far enough. “The humans writing the rules simply cannot keep up,” said Worlds cofounder and CEO Dave Copps. “With deep learning models, we are able to load videos into our system and make predictions immediately by leveraging prior learning. As the system learns from one environment, it can transfer that learning to other environments.”

The company is using reinforcement learning, a segment of AI that’s hot right now, to generate synthetic data so it can create its own training sets. It also uses generative adversarial networks (GANs), architectures that pit two neural networks against each other to generate new, synthetic instances of data.

Worlds’ initial customers include large oil and gas companies Chevron and Petronas, which require sophisticated monitoring of dozens of oil and gas locations, said Copps. Worlds is also targeting U.S. Department of Defense installations and plans to expand to serve retail, entertainment, and other industrial companies, Copps said.

As an example of the technology in action, an oil and gas company can use Worlds’ software to track trucks driving in and out of an oil well property, note how full the trucks are, and proactively alert well operators if a truck arrives full instead of empty, which could signal a security risk. If a company has 100 such oil wells to watch, Worlds’ AI-driven system can track all of them automatically and transfer learnings between them. The surveillance technology can be applied to both open and closed spaces, Copps said.
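To make the truck example concrete, here is a minimal Python sketch of the alerting step alone. It is not Worlds’ implementation: the `TruckArrival` record, the `fill_fraction` estimate, and the 20% threshold are all hypothetical, and the sketch assumes some upstream vision model has already estimated how full each arriving truck is.

```python
from dataclasses import dataclass


@dataclass
class TruckArrival:
    site_id: str          # which well the truck arrived at (hypothetical ID scheme)
    truck_id: str         # identifier assigned by the tracking system (assumed)
    fill_fraction: float  # assumed estimate from video: 0.0 = empty, 1.0 = full


def flag_suspicious_arrivals(arrivals, max_expected_fill=0.2):
    """Return arrivals whose estimated load is higher than an empty truck should carry."""
    return [a for a in arrivals if a.fill_fraction > max_expected_fill]


# Illustrative data only; these are not real observations.
arrivals = [
    TruckArrival("well-001", "truck-A", 0.05),
    TruckArrival("well-002", "truck-B", 0.90),
    TruckArrival("well-100", "truck-C", 0.10),
]

flagged = flag_suspicious_arrivals(arrivals)
for a in flagged:
    print(f"ALERT {a.site_id}: {a.truck_id} arrived {a.fill_fraction:.0%} full")
```

Because the check runs over a plain list of arrivals, the same function could in principle cover one well or 100. The hard part, estimating fill level from video, is outside this sketch.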

The funding round, led by Align Capital, includes Chevron Technology Ventures, Piva, GPG Ventures, and Hypergiant Industries.

Worlds is a spinout of Hypergiant Sensory Sciences (HSS), a division of Austin, Texas-based big data and AI product development company Hypergiant Industries. HSS will remain as part of Hypergiant, and Hypergiant Industries will continue to be a key partner for Worlds, Copps said.

VentureBeat wrote about Copps’ cofounding of HSS in 2018. Copps previously built AI company Brainspace, which focused on natural language processing.

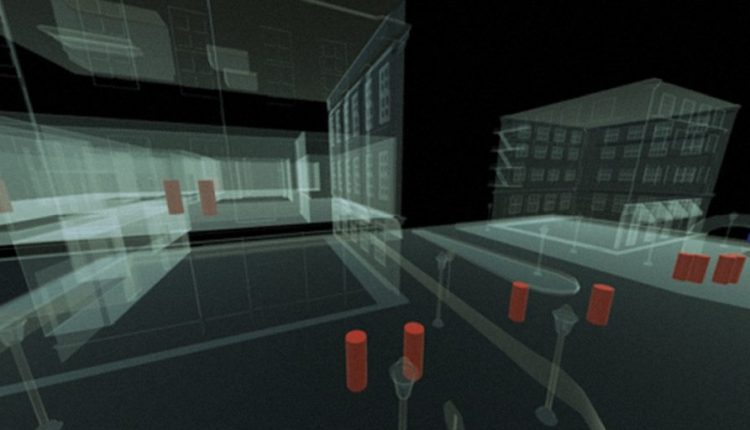

More broadly, Worlds says it plays in the growing extended reality (XR) market, where computers generate immersive experiences for physical worlds. Copps again used the example of an oil company like Chevron. When an employee is notified of something unusual, they can zoom in on the area in question. As Copps explained it, they could start with a “satellite view,” which might be enabled by satellites, or even drones flying over a property. The employee can then fly down around a specific property — almost like a drop-in from the popular game Fortnite, Copps said — and view it in 3D, even looking through the walls.

The XR market is expected to grow to more than $209 billion in the next four years, a nearly eightfold increase from the $27 billion estimated in 2018, according to one report.

Copps said Worlds’ system is built on three levels of learning. The first is person to machine, where a person tells the system what an object is by labeling it. In the second level, the machine takes over after it has been trained on just a few minutes of video and starts teaching itself and automatically tagging objects. The third level is transfer learning, where the system can transfer learnings from one environment to another. For example, if a company is watching 50 different locations, and location number 37 identifies an unknown truck, the system can tag the truck, follow it around in the video, and then transfer that knowledge to the other environments. “The idea is to create these perpetually learning environments,” said Copps.

The company also uses a layer of bots assigned to specific tasks, almost like virtual employees, Copps said. A security bot, for example, might be focused on a $100,000 generator and taught to send an alarm if the generator moves more than 30 feet. Another bot might detect people who are not authorized to be in a specific area and send an alarm or ring the person’s phone. The bots are reusable and can be duplicated for multiple environments, Copps said.
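The generator example can be sketched as a small, reusable rule. This is an illustration under stated assumptions, not Worlds’ code: the `AssetBot` class, its field names, and the flat x/y coordinates in feet are hypothetical, and the sketch assumes the tracking system already reports the asset’s position.

```python
import math
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class AssetBot:
    asset_name: str
    home_xy_feet: tuple           # where the asset is expected to sit (assumed coordinates)
    max_drift_feet: float = 30.0  # threshold taken from the generator example

    def should_alarm(self, observed_xy_feet):
        """True if the asset has moved farther from home than the allowed drift."""
        drift = math.dist(self.home_xy_feet, observed_xy_feet)
        return drift > self.max_drift_feet


generator_bot = AssetBot("generator", (0.0, 0.0))
print(generator_bot.should_alarm((10.0, 10.0)))  # about 14.1 ft from home: False
print(generator_bot.should_alarm((30.0, 30.0)))  # about 42.4 ft from home: True

# "Duplicating" the bot for another environment: same rule, different home position.
site_b_bot = replace(generator_bot, home_xy_feet=(500.0, 120.0))
```

Keeping the bot as an immutable value makes duplication cheap. Copying it for a new site changes only the fields that differ, which loosely mirrors the reusable bots Copps describes.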